Agentic AI is the most significant shift in how businesses operate since cloud software. Here's what it actually is, why most early attempts fall short, and how to think about it before you spend a dollar.

Key takeaways

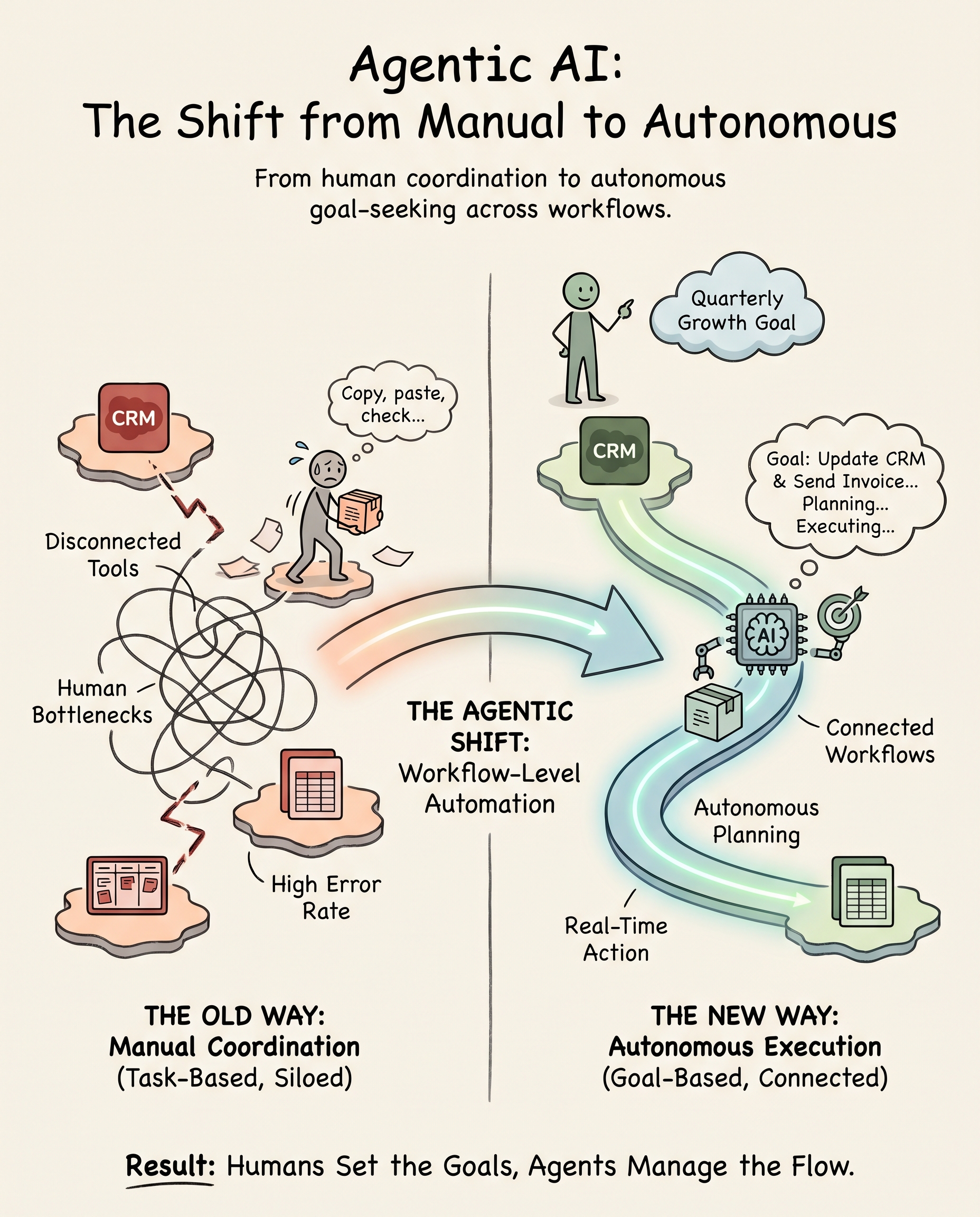

- Agentic AI refers to autonomous systems that plan, reason, and act across tools and workflows to achieve goals with minimal human oversight. That makes it a fundamentally different category from generative AI.

- According to a PwC survey of 300 senior executives, 79% say AI agents are already being adopted inside their companies, with 66% reporting measurable productivity gains where adoption has taken hold.

- The organizations seeing the highest return are not the ones with the most tools. They're the ones that mapped their workflows before they bought anything.

Henry Ford didn't invent the automobile. In 1913, he introduced the moving assembly line, a system where each station did one thing, passed its output to the next, and the whole line coordinated without a foreman standing between every step. Output multiplied. Labor costs per unit fell. Competitors who kept building cars one at a time couldn't close the gap.

We're at a structurally similar moment with agentic AI for business. The technology isn't new in the way a gadget is new. It's a new organizational principle: work that used to require a human to initiate, route, and complete each step can now be handled by systems that reason about the goal and coordinate the steps themselves. The businesses that understand this first, and redesign accordingly, will be very difficult to catch.

Most leaders I speak with know something has shifted. What they're less clear on is what agentic AI actually is, how it differs from the AI tools they've already tried, and what separates a genuine deployment from a well-marketed demo. That's what this article is for.

What agentic AI for business actually means

AWS defines agentic AI as an autonomous AI system that can act independently to achieve pre-determined goals. Thomson Reuters frames it as AI that can plan and execute complex tasks across multiple systems, making decisions, using various tools and APIs, and performing sequences of actions without continuous human guidance. The University of Cincinnati distills it to three hallmarks: autonomy, reasoning, and adaptable planning.

Put those together in plain terms: a generative AI tool responds when you ask it something. An agentic AI system pursues a goal. You tell it what you want accomplished. It figures out the steps, uses the tools available to it, monitors its own progress, and adjusts when conditions change. The human is in the loop at the level of goals and oversight, not at the level of every individual task.

MIT Sloan adds an important nuance: the most capable agentic systems involve multiple specialized agents working in coordination (a reasoning agent, a retrieval agent, an execution agent), each with narrow expertise and all orchestrated toward a shared outcome. This is less like a single smart assistant and more like a well-run team where each member knows their role.

For your business, the practical distinction is this: generative AI makes individuals faster at tasks they were already doing manually. Agentic AI removes whole categories of manual work from the human queue. The gap in organizational output between those two states is significant, and it compounds over time.

Why the tools most businesses have tried haven't worked

The most common entry point for AI in small and mid-sized businesses is a subscription: ChatGPT Plus for the team, an AI feature bundled into the CRM, a writing assistant built into the project management tool. These are generative AI features, not agentic systems. They augment individual tasks within a workflow. They don't connect the workflows themselves.

The result is a familiar frustration. Someone uses AI to draft a proposal faster. Someone else uses it to summarize a meeting. A third person uses it to write a job post. Each of those is a real productivity gain for one person on one task. But the data still doesn't flow from the CRM to the proposal to the project record automatically. The handoffs still require a human. The administrative overhead is still there, just slightly compressed. You've made individuals marginally faster inside a broken system, which is meaningfully different from fixing the system.

The second approach I see often is what I'd call the ambitious pilot: a company hires a contractor or buys an enterprise AI platform to build something specific, such as a chatbot for customer service or an AI that scores inbound leads. These pilots sometimes work well in isolation. But they stall when it comes time to connect them to the surrounding workflow. The chatbot can answer common questions, but it can't update the CRM record, create a follow-up task, and flag the account for review without a human bridging those systems. The AI sits at the edge of the workflow rather than running through it. In my experience, this is where many AI projects fail: the technology was real, but the workflow underneath it wasn't mapped or connected.

A third pattern is buying an "agentic" platform because the category is attracting serious attention right now. MarketsandMarkets projects the agentic AI market will grow from $7.06 billion in 2025 to $93.2 billion by 2032, a 44.6% compound annual growth rate. That kind of growth attracts vendors, and not all of them are selling what the label says. A platform with an "agent" feature that still requires a human to trigger every action, review every output, and manually push results to the next system is not agentic AI. It's generative AI with better marketing. Buying it doesn't change your operational architecture. It just changes your invoice.

The reframe: you don't have an AI problem, you have a workflow connectivity problem

Here's where I'd push back on how most leaders frame this decision. The question isn't "which agentic AI tool should we buy?" That question skips straight to the answer before diagnosing the actual constraint.

The constraint, in almost every small and mid-sized business I've seen, is that the workflows themselves are undocumented and disconnected. Your CRM doesn't talk to your project management tool. Your accounting software doesn't surface margin data into your operations dashboard. Your support inbox triggers a human response, not a system response. Agentic AI can automate across those boundaries, but only if the boundaries are visible. You can't automate what you haven't mapped.

AI rewards commitment, not impatience. That's a framing I keep returning to, and it applies here. The organizations seeing the highest ROI from agentic systems are not the ones that moved fastest to deploy. They're the ones that did the unglamorous work first: documenting their highest-volume manual processes, identifying where data moves by human hand between systems, and establishing which handoffs are costing the most in labor hours per week.

A Google Cloud study of executives found that 13% qualify as "agentic AI early adopters": organizations dedicating 50% or more of their future AI budget to agents and embedding them deeply across operations. Among that group, 88% report ROI from AI in at least one use case, versus 74% across all organizations. I don't think the gap is explained by the tools they chose. In my experience, it's explained by how they approached the work before the tools were selected.

For your business, the reframe is this: agentic AI is not a product decision. It's an organizational design decision. The tool comes after you know which workflow you're redesigning and why.

How to think about agentic AI before you buy anything

I'd suggest a straightforward diagnostic before any platform evaluation begins. It has three steps, and none of them require a vendor.

Step 1: Find the highest-volume manual handoff in your business. This is the moment in a workflow where a human picks up information from one system and carries it to another, not because they're adding judgment, but because the systems aren't connected. Proposal data re-entered from a CRM into a document template. Support ticket details copied into a project record. Invoice line items transferred manually from a project tracker to accounting software. These are the handoffs where agentic AI delivers the fastest, most measurable return, because the work is well-defined, repetitive, and currently consuming hours your team could spend on something harder to automate.

Step 2: Estimate the labor cost of that handoff. Take the weekly hours your team spends on the task, multiply by the fully loaded hourly cost of the people doing it, and multiply by 50 working weeks. A task consuming 8 hours per week at a $60 fully loaded hourly rate represents $24,000 in annual operational overhead (8 × $60 × 50) hidden in a single workflow. Most businesses have three to five workflows in that range. The math tends to be clarifying in a way that vendor demos are not.
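If you want to run this estimate across several workflows at once, the arithmetic fits in a few lines of Python. This is a minimal sketch, not a costing tool: the `Handoff` class and `rank_by_cost` helper are names invented for illustration, and the only figures taken from the text are 8 hours, $60, and 50 weeks.

```python
from dataclasses import dataclass

WORKING_WEEKS = 50  # the working-week count used in the estimate above


@dataclass
class Handoff:
    """One manual handoff and the labor it consumes (illustrative structure)."""
    name: str
    hours_per_week: float
    loaded_hourly_rate: float  # fully loaded cost of the people doing the work

    def annual_cost(self, weeks: int = WORKING_WEEKS) -> float:
        # weekly hours x fully loaded hourly rate x working weeks
        return self.hours_per_week * self.loaded_hourly_rate * weeks


def rank_by_cost(handoffs: list[Handoff]) -> list[Handoff]:
    """Return handoffs ordered from most to least expensive per year."""
    return sorted(handoffs, key=lambda h: h.annual_cost(), reverse=True)


# The example from the text: 8 hours per week at a $60 fully loaded rate.
example = Handoff("proposal re-entry", hours_per_week=8, loaded_hourly_rate=60)
print(f"{example.name}: ${example.annual_cost():,.0f} per year")
```

Add your own workflows to a list and pass it to `rank_by_cost` to see which handoff is worth examining first.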

Step 3: Map the data the AI would need to automate that handoff. Where does the input data live? Which systems does it touch? What does a successful output look like, and where does that output need to go? ISACA notes that agentic systems excel at specific, well-defined tasks, and that well-defined means the inputs, decision rules, and outputs are documented before the system is built. If you can answer these questions clearly, you're ready to evaluate platforms. If you can't, no platform will solve the problem.
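One way to keep yourself honest on Step 3 is to write the map down as a structured record and check it for blanks. The sketch below is an assumption about how you might do that, not a standard format: the `HandoffMap` class, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class HandoffMap:
    """The Step 3 questions as a record (hypothetical field names)."""
    name: str
    input_sources: list[str] = field(default_factory=list)    # where the input data lives
    systems_touched: list[str] = field(default_factory=list)  # which systems it touches
    decision_rules: list[str] = field(default_factory=list)   # documented rules, if any
    success_output: str = ""                                   # what a good output looks like
    output_destination: str = ""                               # where the output needs to go

    def missing_answers(self) -> list[str]:
        """List the questions that have not been answered yet."""
        answers = {
            "input_sources": self.input_sources,
            "systems_touched": self.systems_touched,
            "decision_rules": self.decision_rules,
            "success_output": self.success_output,
            "output_destination": self.output_destination,
        }
        return [question for question, answer in answers.items() if not answer]

    def ready_for_platform_evaluation(self) -> bool:
        return not self.missing_answers()


# Illustrative values only, loosely based on the proposal example in Step 1.
proposal = HandoffMap(
    name="CRM to proposal template",
    input_sources=["CRM client record"],
    systems_touched=["CRM", "document templates"],
    success_output="Draft proposal with client data filled in",
)
print(proposal.missing_answers())
```

Anything the check reports as missing is a question to answer before a platform conversation starts.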

Building an AI strategy starts here: not with the technology, but with the operational picture that tells you where technology will pay back fastest.

What does agentic AI for business look like in practice?

The use cases that consistently deliver measurable value in small and mid-sized businesses follow a pattern: they involve high-volume, well-defined workflows that currently require human coordination between systems. A few concrete examples:

- Proposal generation: An agent pulls relevant client data from the CRM, pricing from a rate card, and scope language from a template library, assembling a first draft that a human reviews and sends. A task that consumed 90 minutes per proposal runs in under five minutes.

- Support ticket triage: An agent receives incoming requests, classifies them by type and urgency, searches internal documentation for relevant answers, drafts a response, and routes the ticket to the appropriate team member with context attached. The human reviews and approves rather than starting from zero.

- Cross-system status updates: An agent monitors project milestones in the project management tool and automatically updates the CRM record, sends a client-facing status note, and flags any budget variance for the account manager. A task that previously required a weekly manual sync becomes continuous and automatic.
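To make the shape of these workflows concrete, here is a rough Python sketch of the support ticket triage example: classify, look up documentation, draft, route, and leave approval with a human. Everything in it is a stand-in. The keyword rules take the place of a model-based classifier, and the `DOCS` and `TEAMS` tables, team names, and reply text are hypothetical placeholders rather than any real product's API.

```python
from dataclasses import dataclass

# Hypothetical keyword rules standing in for a model-based classifier.
TYPE_KEYWORDS = {
    "billing": ("invoice", "refund", "charge"),
    "technical": ("error", "bug", "crash"),
}
URGENT_WORDS = ("urgent", "outage", "down")

# Placeholder documentation snippets and routing table (assumptions).
DOCS = {
    "billing": "Please see our billing FAQ.",
    "technical": "Please see our troubleshooting guide.",
}
TEAMS = {"billing": "finance", "technical": "engineering"}


@dataclass
class TriagedTicket:
    text: str
    ticket_type: str
    urgent: bool
    draft_reply: str
    assigned_team: str
    needs_human_approval: bool = True  # a human reviews before anything is sent


def classify(text: str) -> tuple[str, bool]:
    """Crude substring matching; a real system would use a model here."""
    lowered = text.lower()
    ticket_type = "general"
    for candidate, keywords in TYPE_KEYWORDS.items():
        if any(word in lowered for word in keywords):
            ticket_type = candidate
            break
    urgent = any(word in lowered for word in URGENT_WORDS)
    return ticket_type, urgent


def triage(text: str) -> TriagedTicket:
    ticket_type, urgent = classify(text)
    reference = DOCS.get(ticket_type, "No matching documentation found.")
    draft = f"Thanks for reaching out. {reference}"
    team = TEAMS.get(ticket_type, "support")
    return TriagedTicket(text, ticket_type, urgent, draft, team)


ticket = triage("URGENT: our invoice shows a double charge")
print(ticket.ticket_type, ticket.urgent, ticket.assigned_team)
```

The design point is the last field: the agent does the coordination work, and the human starts from a routed draft instead of a blank page.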

According to PwC's AI agent survey, the most common deployment areas among companies actively using AI agents are customer service (57%), sales and marketing (54%), and IT and cybersecurity (53%). Those aren't arbitrary priorities. They're the functions with the highest-volume, most repetitive handoffs, which is exactly the pattern the diagnostic above is designed to surface.

AI in operations and process improvement follows the same logic: find the repetitive coordination work, map the data, automate the handoff, and measure the hours recovered.

The organizational question that matters more than the technology question

The part of the agentic AI conversation that rarely makes it into vendor materials is the organizational side. The European Data Protection Supervisor describes agentic AI as systems acting autonomously with limited human intervention, and that description raises a question that every business leader should answer before deployment: where does human oversight live, and who is accountable when the system acts incorrectly?

This isn't a reason to slow down. It's a reason to be deliberate. The businesses that deploy agentic AI well build clear escalation paths into the workflow design: the agent handles defined cases autonomously, escalates edge cases to a human, and logs its actions in a way the team can audit. Thomson Reuters emphasizes this in their own deployment philosophy: domain-specific agents refined by subject-matter experts, with human expertise firmly in the loop at the level of oversight and exception handling, not at the level of every routine action.

For your business, the governance question is practical, not philosophical: which decisions should the agent make autonomously, which should it flag for human review, and how will you know if it's making errors? Getting crisp on those three questions before you build is the difference between a system your team trusts and one they quietly route around. Building an AI-ready organization means designing that accountability structure before the tools go live, not after.
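Those three questions translate fairly directly into a routing rule and a log. The Python sketch below is one possible shape under stated assumptions: the action names, the 0.8 confidence threshold, and the in-memory `audit_log` are all hypothetical, and a real deployment would persist the log somewhere durable.

```python
from datetime import datetime, timezone

# Hypothetical policy: actions the agent may take without review.
AUTONOMOUS_ACTIONS = {"update_crm_record", "send_status_note"}
CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; tune it for your own workflow

# In-memory audit trail for illustration only.
audit_log: list[dict] = []


def route_action(action: str, confidence: float) -> str:
    """Decide whether the agent executes an action or escalates it to a human."""
    if action in AUTONOMOUS_ACTIONS and confidence >= CONFIDENCE_THRESHOLD:
        decision = "execute"
    else:
        # Unknown actions and low-confidence cases always go to a person.
        decision = "escalate"
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "confidence": confidence,
        "decision": decision,
    })
    return decision


def escalation_rate() -> float:
    """Share of logged actions that were escalated, as a rough health signal."""
    if not audit_log:
        return 0.0
    escalated = sum(1 for entry in audit_log if entry["decision"] == "escalate")
    return escalated / len(audit_log)


print(route_action("update_crm_record", 0.95))
print(route_action("issue_refund", 0.99))
```

An action that isn't on the autonomous list is escalated no matter how confident the agent is, which keeps the accountability decision with the people who wrote the list.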

Where to go from here

The shift to agentic AI is real, the adoption curve is steep, and the gap between organizations that redesign their workflows and those that layer tools onto broken processes is already starting to show in margin and capacity. You don't need to move fast. You need to move clearly.

If you'd like a structured look at where your business stands, our complimentary AI Readiness Assessment maps your current workflows, identifies the highest-value automation opportunities, and translates the gap into a dollar figure your P&L will recognize. No tools to buy, no platform to evaluate. Just a clear picture of where the leverage is.

Take the Complimentary AI Readiness Assessment