The odds are stacked against your AI pilot. Here's what the small group that beats those odds actually does.

Key takeaways

Research suggests more than 80% of AI projects across organizations of all sizes fail to deliver their intended business value, and SMBs have less financial runway to absorb those failures.

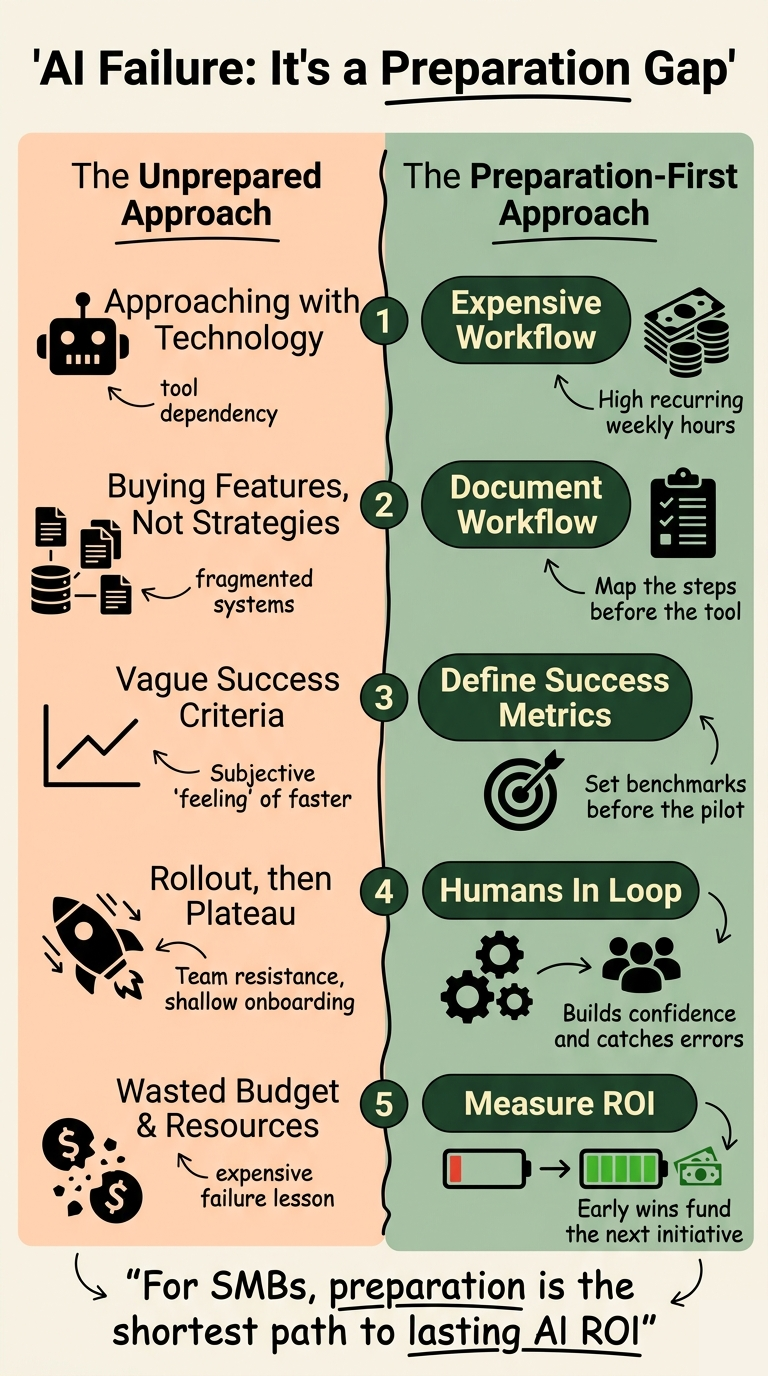

The most common cause isn't the technology. It's starting with a tool instead of a workflow, and measuring the wrong things afterward.

The roughly 5% that succeed (the flip side of MIT's widely cited 95% figure) have a recognizable pattern in common: narrow scope, documented workflows, clear ROI targets, fast feedback loops, and human oversight baked in from day one.

Walk into most small businesses right now and you'll find some version of the same scene. Someone on the team is using ChatGPT to draft emails. A manager is testing an AI feature inside the CRM. The owner read something about automation and signed up for a platform demo last Tuesday. There's genuine curiosity, some early experimentation, and a quiet anxiety that if the business doesn't figure this out soon, a competitor will.

Then the pilot stalls. The tool gets underused. Nobody measures anything. Six months later, the conversation starts over with a different product.

This pattern explains much of why AI projects fail at such a high rate for SMBs. It's not a technology problem. It's an approach problem. And the businesses that break the pattern are doing five specific things differently.

Why do so many AI projects fail for SMBs in the first place?

The numbers are sobering. A 2025 RAND Corporation analysis cited by Pendoah found that more than 80% of AI projects across organizations of all sizes fail to deliver their intended business value. A separate S&P Global Market Intelligence survey of over 1,000 organizations found that 42% of companies abandoned most of their AI initiatives in 2025, up from 17% the year before. The average organization scrapped 46% of AI proof-of-concepts before they ever reached production.

For large enterprises, a failed pilot is an expensive lesson. For an SMB, it's often a meaningful chunk of the annual technology budget, plus weeks of staff time that could have gone elsewhere. The stakes are different, which means the approach has to be different too.

There's also a data problem that hits SMBs harder than most people acknowledge. Gartner's 2025 research identifies poor data quality and misaligned objectives as the leading causes of AI project failure, together accounting for 85% of cases. Only 12% of organizations report data of sufficient quality for AI applications. That number is almost certainly lower for SMBs, where customer records live in spreadsheets, notes are stored in someone's inbox, and half the institutional knowledge walks out the door when an employee leaves.

The widely circulated headline that MIT research found 95% of organizations see no measurable business return on generative AI spending generated a lot of anxiety. What it actually describes is a deployment problem: most AI investments never move from pilot to production. The technology works. The path from demo to daily workflow is where projects collapse.

Why what you've already tried probably hasn't worked

If you've invested in AI tools before and felt underwhelmed, you're in good company. The pattern looks roughly like this: a vendor promises time savings, someone on the team runs a test, the test looks promising, rollout happens, adoption plateaus, and within a quarter the tool is quietly deprioritized.

There are a few structural reasons this keeps happening.

The first is starting with the tool instead of the workflow. An AI writing assistant is not a strategy. An AI-powered CRM feature is not an implementation plan. When the selection process starts with "what AI tools should we use" instead of "which workflow is costing us the most in weekly hours," the project is already building on sand. No tool can improve a process that hasn't been mapped.

The second is buying "AI-powered" features inside every existing platform. Most major SaaS products now have an AI layer. Many of them are genuinely useful. But buying AI features inside a disconnected tool stack doesn't solve the underlying data fragmentation. It just adds more cognitive overhead. You end up with AI in five places, none of them talking to each other, and no clearer picture of your operations than you had before.

The third is skipping the measurement design. If you don't define what success looks like before the pilot starts, there's no way to evaluate whether it worked. "The team seems to like it" is not a business case. Neither is "it feels faster." Without baseline data, a clear metric, and a time horizon, you can't build organizational momentum or justify continued investment.

Salesforce's research on SMB AI adoption also flags inadequate post-sales support as a persistent problem. AI vendors often lack the bandwidth to deliver consistent service to smaller businesses. You get a strong demo and a shallow onboarding, then you're largely on your own figuring out how to make it work in your actual environment.

The reframe: it's an organizational problem, not a technology problem

Here's the thing that changes how you approach this. AI doesn't fail because it can't do the job. It fails because the job hasn't been clearly defined, the data it needs isn't clean, the team hasn't been prepared, and there's no one accountable for making sure it delivers.

PathOpt's analysis of real AI failure-rate data makes this point directly: the most common causes of failed pilots are leadership and buy-in issues, strategic planning problems, and a mismatch between AI capabilities and actual business needs. Technical limitations rank lower than most people expect.

What this means for your business is that the preparation work (workflow mapping, data hygiene, success metrics, change management) is not the boring part you do before the AI project. It is the AI project. The businesses that get lasting results from AI aren't the ones with the most sophisticated models. They're the ones that did the foundational work first.

This also means SMBs have a real advantage over large enterprises in this environment. You can make decisions faster. You can change direction without six layers of approval. You can give an AI agent a narrow scope and iterate on it within days. The 5% who succeed are often smaller, faster-moving businesses that treated the pilot like a business problem, not a technology procurement exercise.

The 5 things the successful 5% do differently

Across the research and implementation patterns that define successful AI adoption in smaller businesses, five behaviors separate the outcomes that compound from the ones that stall.

1. They start with the most expensive workflow, not the most novel use case.

The first question is not "what can AI do?" It's "which recurring task in our business consumes the most labor hours per week?" Proposal generation, support ticket triage, appointment scheduling, data entry between disconnected systems. These aren't glamorous use cases. They're the ones that pay back fastest. A 10-hour-per-week manual process running 50 weeks a year consumes 500 staff hours; at a fully loaded cost of $50 an hour or more, that is at least $25,000 a year in recoverable value. That's where the ROI math is clearest, and where an AI pilot can demonstrate measurable results within 30 to 90 days.
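To make the "most expensive workflow" question concrete, here is a minimal Python sketch that ranks candidate workflows by annual labor cost. The workflow names, weekly hours, and hourly rates are hypothetical placeholders, not data from any real business; the 50 working weeks per year matches the assumption in the paragraph above.

```python
from dataclasses import dataclass

WORKING_WEEKS_PER_YEAR = 50  # assumption carried over from the text


@dataclass
class Workflow:
    name: str
    hours_per_week: float      # staff hours the task consumes each week
    loaded_hourly_cost: float  # fully loaded cost per hour (wages plus overhead)

    def annual_cost(self) -> float:
        """Annual labor cost of running this workflow manually."""
        return self.hours_per_week * WORKING_WEEKS_PER_YEAR * self.loaded_hourly_cost


def rank_by_annual_cost(workflows: list[Workflow]) -> list[Workflow]:
    """Return workflows sorted from most to least expensive per year."""
    return sorted(workflows, key=lambda w: w.annual_cost(), reverse=True)


# Hypothetical inputs for illustration only; replace with your own baseline data.
candidates = [
    Workflow("Proposal generation", hours_per_week=6, loaded_hourly_cost=80),
    Workflow("Support ticket triage", hours_per_week=10, loaded_hourly_cost=55),
    Workflow("Appointment scheduling", hours_per_week=4, loaded_hourly_cost=40),
]

for wf in rank_by_annual_cost(candidates):
    print(f"{wf.name}: ${wf.annual_cost():,.0f} per year")
```

With these made-up numbers, support ticket triage ($27,500 a year) edges out proposal generation ($24,000), even though proposal work is billed at a higher rate. Ranking on annual cost alone is deliberately simple; it identifies where to look first, not whether the workflow is ready to automate.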

2. They document the workflow before they touch the technology.

Before any tool is selected, before any vendor is contacted, the successful group can answer these questions in writing: What are the exact steps in this workflow? Who touches it and when? Where does information come from, and where does it go? What does a good output look like? This documentation isn't a bureaucratic exercise. It's the input the AI actually needs to work. You can't automate a process nobody has described.

3. They define success metrics before the pilot starts.

The baseline gets measured first: current processing time, current error rate, current weekly hours consumed. Then the success threshold is set: what number, by what date, would justify moving this to production? This removes the subjective judgment calls that let mediocre pilots drag on indefinitely and lets genuinely good results build real organizational conviction.
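The baseline-then-threshold discipline can be expressed as a simple go/no-go rule. The Python sketch below is one way to encode it, assuming hypothetical metric names, targets, and a review deadline; the point it illustrates is that the decision is fixed in advance and forced at the deadline, so a pilot that misses its threshold is stopped or rescoped rather than extended indefinitely.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class PilotMetric:
    name: str
    baseline: float  # measured before the pilot starts
    target: float    # success threshold agreed before the pilot starts
    lower_is_better: bool = True  # True for processing time, error rate, hours consumed


def meets_target(metric: PilotMetric, observed: float) -> bool:
    """Check an observed value against the pre-agreed threshold."""
    if metric.lower_is_better:
        return observed <= metric.target
    return observed >= metric.target


def pilot_decision(metrics: list[PilotMetric], observed: dict[str, float],
                   deadline: date, today: date) -> str:
    """Return a go/no-go recommendation once the agreed deadline arrives."""
    if today < deadline:
        return "keep measuring"
    if all(meets_target(m, observed[m.name]) for m in metrics):
        return "move to production"
    # Missing the threshold at the deadline ends the pilot instead of extending it.
    return "stop or rescope"


# Hypothetical baseline, targets, and observations for a 90-day pilot.
metrics = [
    PilotMetric("minutes_per_item", baseline=12.0, target=5.0),
    PilotMetric("error_rate_pct", baseline=4.0, target=4.0),
]
observed = {"minutes_per_item": 3.6, "error_rate_pct": 2.5}

print(pilot_decision(metrics, observed, deadline=date(2025, 9, 30), today=date(2025, 10, 1)))
```

Here both hypothetical targets are met after the deadline, so the sketch prints "move to production". Setting the error-rate target equal to its baseline encodes a common guardrail: the pilot may not make quality worse while it speeds things up.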

4. They keep humans in the loop, especially early.

The businesses that accelerate AI adoption fastest are often the ones that resist the urge to fully automate too quickly. A human review step in the first 90 days serves two purposes: it catches the edge cases and errors that training data didn't anticipate, and it builds team confidence in the system. Staff who are involved in reviewing and correcting AI outputs early become advocates for the technology, not resistors of it. Fear that AI will replace them tends to dissolve when they're the ones teaching it.

5. They measure ROI explicitly and report it visibly.

The 5% treat AI ROI as a business metric on the same level as revenue and margin. Hours reclaimed per week. Reduction in turnaround time. Drop in error rate. They make that number visible to the team and to leadership. This creates a compounding effect: early wins fund the next initiative, and a culture of measurement attracts the kind of talent that builds on it. For more on how to build the conditions for this kind of success, our guide on building an AI strategy that actually works walks through the full decision framework.

What this looks like in practice

Consider a professional services firm processing 40 to 60 client intake forms per week. Each form requires someone to read it, extract key information, cross-reference it with existing client records, and route it to the right team member. It's a 12-minute task done 50 times a week, which adds up to 10 hours of work by a person whose time costs the firm roughly $65 per hour. That's a $650 weekly drag, or about $33,000 annually across 50 working weeks, sitting inside a single workflow that most firms consider routine overhead.

An AI agent configured with clear intake rules, connected to the firm's CRM, and reviewed by a team member for the first 30 days can typically bring that processing time down by 70% or more. The ROI is measurable within the first month. The team member who was spending 10 hours a week on intake is now spending three, and has the capacity to take on higher-value work. That's the math that builds the business case for the next initiative.
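The intake example's arithmetic can be reproduced in a few lines of Python. The inputs come from the scenario above; the 50 working weeks per year is an assumption (it yields $32,500, which the text rounds to about $33,000), and the annual savings figure is derived from the 70% reduction rather than stated in the original.

```python
MINUTES_PER_FORM = 12       # from the example: 12 minutes per intake form
FORMS_PER_WEEK = 50         # midpoint of the 40 to 60 forms per week
LOADED_HOURLY_COST = 65.0   # fully loaded cost of the person doing intake
WORKING_WEEKS = 50          # assumption: 50 working weeks a year
TIME_REDUCTION_PCT = 70     # the 70% reduction in processing time described above

hours_before = MINUTES_PER_FORM * FORMS_PER_WEEK / 60              # hours per week today
hours_after = hours_before * (100 - TIME_REDUCTION_PCT) / 100      # hours per week with AI
weekly_cost_before = hours_before * LOADED_HOURLY_COST
annual_cost_before = weekly_cost_before * WORKING_WEEKS
annual_savings = (hours_before - hours_after) * LOADED_HOURLY_COST * WORKING_WEEKS

print(f"Before: {hours_before:.0f} h/week, ${weekly_cost_before:,.0f}/week, ${annual_cost_before:,.0f}/year")
print(f"After: {hours_after:.0f} h/week, about ${annual_savings:,.0f}/year of capacity freed")
```

Running it prints 10 hours and $650 a week ($32,500 a year) before, and 3 hours a week after, freeing roughly $22,750 a year of staff capacity. Keeping the reduction as an integer percentage avoids floating-point drift in the intermediate values.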

The reason most businesses never get there isn't that the technology isn't capable. It's that they never mapped the workflow, never measured the baseline, and never defined what success looked like before they started. If you want to understand where your own business sits on this spectrum, our guide on AI for operations and process improvement covers how to identify and prioritize the workflows worth automating first. And if you're thinking about what the broader organizational conditions for success look like, building an AI-ready organization covers the 12-month approach in detail.

Is your business set up to be in the 5%?

The gap between businesses that are compounding AI gains and businesses that are running the same failed pilot for the third time is not a technology gap. It's a preparation gap. The five behaviors above aren't complicated. They don't require a large budget or a technical team. They require clarity about where the real cost is, honesty about what the data actually looks like, and discipline about measuring what matters.

If you've tried AI before and it didn't deliver, that experience isn't a verdict on whether AI can work for your business. It's information about where the process broke down. The fix is usually upstream of the tool.

Our complimentary AI Readiness Assessment is the fastest way to find out which workflow in your business has the highest recoverable value, where your data and team readiness actually stand, and what a realistic first initiative looks like sized to your budget. It translates your specific operations into a dollar figure: annual productivity waste, revenue opportunity from unlocked capacity, and a clear starting point. Take the Complimentary Readiness Assessment and find out what your business is leaving on the table.