Building an AI roadmap in a regulated industry isn't a slower version of building one anywhere else. It's a fundamentally different exercise, and the teams that treat it as the former rarely finish.

Key takeaways

- Governance precedes deployment: In regulated environments, an AI roadmap that doesn't begin with a compliance and risk-classification layer will stall at the first audit, not the last.

- The real blocker is usually organizational, not technical: McKinsey's 2025 State of AI survey found that nearly two-thirds of organizations haven't begun scaling AI across the enterprise, and the leading causes point to governance gaps, not model limitations.

- Shadow AI is already inside your walls: A BlackFog compliance survey found that 49% of employees use AI tools at work that aren't sanctioned by their employer, including in industries with strict data-handling rules.

In 1921, Henry Ford faced a crisis that had nothing to do with his assembly lines. His organization had grown faster than its information systems could manage, and decisions that should have taken days were taking weeks. The machines were ready. The organizational design wasn't.

A century later, the same dynamic is playing out in hospitals, financial institutions, insurance firms, and government agencies investing in AI. The models are capable. The infrastructure is available. But the roadmap, the actual sequenced plan for how AI gets embedded into a compliance-dense, audit-heavy operating environment, is either missing entirely or borrowed from a software company that has never seen a regulatory examination.

If you're leading AI adoption inside a regulated business, you've probably already felt this. The question isn't whether AI can help. It clearly can. The FDA issued a record 295 AI/ML medical device clearances in 2025 alone, and financial regulators across the globe are actively updating frameworks to accommodate algorithmic decision-making. The question is how you build a roadmap that moves fast enough to matter and carefully enough to survive the environment it's operating in.

Why the standard AI playbook breaks down in regulated settings

The canonical enterprise AI playbook (identify a use case, run a pilot, measure results, scale) was designed for environments where the cost of a mistake is a missed metric. In a regulated industry, the cost of a mistake can be a consent decree, a license suspension, or a HIPAA breach notification to 50,000 patients.

That's not a reason to move slowly for its own sake. It's a reason to build the roadmap differently from the first step.

According to the ITU's Annual AI Governance Report 2025, the regulatory landscape demands proactive and adaptive governance structures, not reactive compliance patches applied after deployment. The EU AI Act's risk-based framework, now in force, classifies AI deployed in healthcare, employment, education, and law enforcement as high-risk, with full compliance requirements expected by August 2026. In the U.S., the picture is more decentralized: White & Case's global regulatory tracker notes that the country still relies primarily on existing sector-specific federal law rather than a single AI statute, which means your compliance obligations depend heavily on which agency has jurisdiction over your specific use case.

For your business, this fragmentation is both a challenge and an opportunity. There's no single rulebook to follow, but there's also no single regulator watching everything at once. The teams that win build a roadmap organized around their specific regulatory exposure, not around a generic AI framework borrowed from an industry with different risk profiles.

What most regulated-industry AI pilots get wrong

The first approach I see most often is what I'd call the governance-last pattern. A team identifies a genuinely valuable use case, builds a working prototype, demonstrates real efficiency gains, and then, three weeks before planned rollout, sends it to legal and compliance for review. The reviewers find problems. The problems require rework. The rework takes longer than the original build. The pilot misses its deadline, loses its executive sponsor, and joins what a lot of organizations quietly call their archive of promising ideas that never launched.

This isn't a story about compliance being obstructionist. It's a story about sequencing. Governance inserted at the end of a build cycle is governance that can only say no. Governance built into the design phase is governance that can help you find the version of the idea that actually ships.

The second approach that consistently falls short is the tool-first strategy: identify an AI platform that looks promising, purchase licenses or access, and then figure out which workflows to apply it to. This pattern is surprisingly common, partly because vendors are skilled at making their tools feel like the answer before the question has been properly asked. According to RTS Labs' 2026 enterprise AI research, only 34% of enterprises report that their AI programs produce measurable financial impact, and fewer than 20% have mature governance frameworks in place. The two findings are related: without a governance foundation, you can't measure what AI is actually doing inside a regulated workflow, and without measurement, you can't demonstrate compliance or business value to the people who control both your budget and your operating license.

A third approach that sounds reasonable but tends to collapse under its own weight is the comprehensive-framework strategy: spend six to twelve months building a complete AI policy, governance committee, risk register, and vendor evaluation rubric before touching a single live workflow. I understand the instinct. In a regulated environment, getting the foundation right feels like the responsible choice. But frameworks built without any contact with real implementation decisions tend to be abstract in exactly the places where specificity matters most. By the time the framework is finalized, the regulatory landscape has shifted, the technology has moved, and the business has either lost patience or started running unsanctioned pilots anyway. That last outcome, the unsanctioned pilots, brings us to the problem hiding underneath all three approaches.

The constraint nobody names: shadow AI is already inside your compliance perimeter

Here's the finding from the research that I think deserves more attention in regulated-industry conversations: according to a BlackFog survey on AI compliance, 49% of employees are already using AI tools at work that haven't been sanctioned by their employer. These tools are frequently being used with sensitive or confidential data, in industries where data-handling rules carry legal weight.

Your AI governance problem isn't coming. It's already here.

This reframes the entire roadmap question. The choice your organization faces isn't between "deploying AI" and "not deploying AI." It's between deploying AI with a governance structure and deploying AI without one. The second option is already happening. The roadmap you build isn't enabling AI adoption; it's moving the adoption that's already occurring into a structure that can protect the business and deliver consistent value.

For your team, this means the urgency calculation changes. The question isn't whether you're ready to introduce AI. It's whether you're ready to govern the AI your people are already using.

How to build an AI roadmap that regulated industries can actually execute

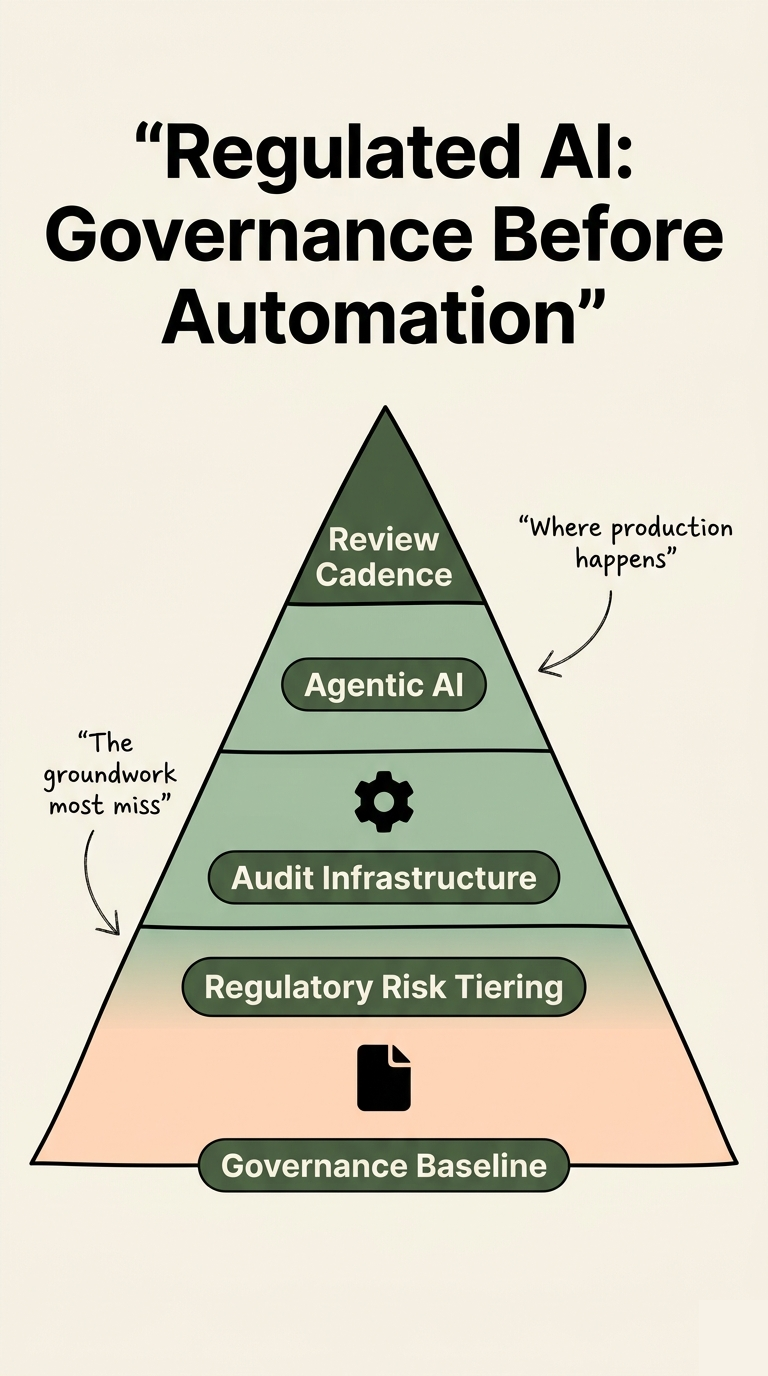

The frameworks that work in regulated environments share a common structure. They're phased, risk-anchored, and built with compliance as a design input rather than a review step. Here's how I'd sequence the work, drawing on the ISO 42001-aligned governance roadmap from Schellman, the CEIMIA sector-specific roadmaps released in late 2025, and the NIST AI Risk Management Framework.

Phase 1: Map your regulatory exposure before you map your use cases

Before you identify a single workflow to automate, you need a clear picture of which regulations govern which parts of your business and what those regulations require of any AI system operating in those areas. This is not a legal review exercise. It's a scoping exercise that determines which AI use cases are straightforward, which are high-risk and require additional controls, and which are effectively off-limits under current frameworks.

- Identify applicable regulations by use case category: HIPAA and FDA guidance if you're in healthcare, SEC and FINRA rules if you're in financial services, state-level requirements if you're operating in multiple jurisdictions. The National Conference of State Legislatures tracks active AI legislation, which is essential reading if your customer base crosses state lines.

- Classify your intended AI use cases by risk level: Not every workflow carries the same regulatory weight. An AI system that drafts internal meeting summaries is categorically different from one that informs a credit decision or a clinical recommendation. Treat them differently from day one.

- Assign ownership before the build starts: Every AI initiative needs a named owner in legal, compliance, or risk who is involved in design decisions, not just final review. This single structural choice prevents most of the governance-last failures described above.

This phase typically takes a focused team two to four weeks. The output isn't a comprehensive policy document. It's a one-page risk map that tells every stakeholder which use cases are in which category, and what the approval path looks like for each.
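To make the one-page risk map concrete, here's a minimal Python sketch of how the output of this phase could be represented. Everything named in it is an illustrative assumption: the three tiers, the `UseCase` fields, and the approval paths are hypothetical, not terms drawn from any regulation or framework. Your compliance owner would define the real ones.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"                # e.g. internal meeting summaries
    HIGH = "high"              # e.g. informs a credit or clinical decision
    OFF_LIMITS = "off_limits"  # not permitted under current frameworks


# Hypothetical approval paths; replace with your organization's real process.
APPROVAL_PATH = {
    RiskTier.LOW: "team lead sign-off",
    RiskTier.HIGH: "compliance owner review plus additional controls",
    RiskTier.OFF_LIMITS: "do not build",
}


@dataclass
class UseCase:
    name: str
    regulations: list[str]                # regulations touching this workflow
    informs_consequential_decision: bool  # credit, clinical, and similar
    prohibited: bool = False              # flagged off-limits during scoping
    compliance_owner: str = ""            # named owner in legal, compliance, or risk


def classify(use_case: UseCase) -> RiskTier:
    """Assign a tier using deliberately simple, illustrative rules."""
    if use_case.prohibited:
        return RiskTier.OFF_LIMITS
    if use_case.informs_consequential_decision:
        return RiskTier.HIGH
    return RiskTier.LOW


def risk_map(use_cases: list[UseCase]) -> list[dict]:
    """Build one row per use case: tier, approval path, and named owner."""
    rows = []
    for uc in use_cases:
        tier = classify(uc)
        rows.append({
            "use_case": uc.name,
            "tier": tier.value,
            "approval_path": APPROVAL_PATH[tier],
            # Surface missing ownership instead of hiding it.
            "owner": uc.compliance_owner or "UNASSIGNED",
        })
    return rows


if __name__ == "__main__":
    cases = [
        UseCase("internal meeting summaries", [], False, compliance_owner="J. Rivera"),
        UseCase("clinical recommendation support", ["HIPAA"], True),
    ]
    for row in risk_map(cases):
        print(row)
```

The one design choice worth keeping, whatever else you change, is that a use case with no named owner shows up as `UNASSIGNED` on the map rather than passing silently.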

What does an AI governance structure actually need to include?

The Databricks practical governance framework identifies the components that consistently appear in enterprise AI governance structures that hold up under scrutiny. I'd adapt them for a regulated-industry context as follows:

- Model documentation standards: Every AI system in a regulated workflow needs a model card or equivalent, capturing what the system does, what data it was trained on, what it was tested against, and where its known limitations are. This isn't optional when an auditor asks.

- Human-in-the-loop specifications: DataMotion's research on agentic AI in regulated industries cites a survey finding that 72% of decision-makers in regulated sectors name security and privacy as their top AI concerns. The practical response is explicit documentation of where human review is required before an AI-assisted decision is acted upon, and what the escalation path looks like when the AI produces an output that falls outside defined parameters.

- Access control and data handling protocols: A Knostic governance statistics roundup reports that 97% of AI-related breach victims lacked proper access controls. In a regulated environment, this finding should anchor your data governance work as a prerequisite to deployment, not a parallel track.

- Continuous monitoring and drift detection: A model that performs accurately at deployment can degrade over time as the underlying data distribution shifts. In regulated industries, that degradation can produce discriminatory outcomes, incorrect recommendations, or compliance violations before anyone notices. Monitoring needs to be built into the operating model, not treated as a post-launch enhancement.
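One common way to quantify the drift described in the last item is the Population Stability Index (PSI), which compares the distribution of a model input or score at deployment against what the model is seeing now. The sketch below is a minimal pure-Python version, not a production monitor. The function names, the ten equal-width bins, and the 0.25 alert threshold are assumptions for illustration; 0.25 is a widely used rule of thumb, not a regulatory requirement.

```python
import math

# Rule-of-thumb alert level (an assumption; tune it with your risk owner).
DRIFT_THRESHOLD = 0.25


def population_stability_index(baseline, current, bins=10):
    """PSI between a baseline sample and a current sample of one numeric value."""
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; cannot build bins")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))
            counts[idx] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


def needs_review(baseline, current, threshold=DRIFT_THRESHOLD):
    """True when drift is large enough to route the model to human review."""
    return population_stability_index(baseline, current) >= threshold


if __name__ == "__main__":
    at_deployment = [i / 100 for i in range(100)]
    this_month = [0.5 + i / 200 for i in range(100)]  # shifted upward
    print(needs_review(at_deployment, at_deployment))
    print(needs_review(at_deployment, this_month))
```

In a regulated workflow, the number matters less than what it triggers: a check like `needs_review` is only useful if a threshold breach opens a documented review with a named owner.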

How do you sequence use cases to build momentum without creating regulatory exposure?

The sequencing principle I'd recommend is: start with internal-facing, low-risk workflows that sit entirely within your existing data governance perimeter. AI systems that summarize internal documents, route support tickets, or surface context for human decision-makers generate real efficiency without triggering the same regulatory review requirements as customer-facing or consequential-decision systems.

This isn't timidity. It's compound interest. A successful internal automation that saves a team eight hours per week produces two things simultaneously: measurable ROI that justifies the next investment, and organizational confidence that reduces the fear-driven resistance that kills most regulated-industry AI programs before they reach scale.
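The sequencing principle and the eight-hours-per-week example can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a scoring standard: the `Candidate` fields, the exposure weights, and the 48 working weeks per year are all hypothetical choices you'd replace with your own.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    customer_facing: bool
    consequential_decision: bool  # e.g. credit or clinical decisions
    hours_saved_per_week: float


def exposure_score(c: Candidate) -> int:
    # Hypothetical weights: consequential decisions count double.
    return 2 * int(c.consequential_decision) + int(c.customer_facing)


def sequence(candidates: list[Candidate]) -> list[Candidate]:
    """Lowest regulatory exposure first; larger time savings break ties."""
    return sorted(
        candidates,
        key=lambda c: (exposure_score(c), -c.hours_saved_per_week),
    )


def annual_hours_saved(hours_per_week: float, working_weeks: int = 48) -> float:
    # working_weeks=48 is an assumption, not a figure from any source.
    return hours_per_week * working_weeks


if __name__ == "__main__":
    backlog = [
        Candidate("credit decision support", True, True, 20),
        Candidate("support ticket routing", False, False, 5),
        Candidate("internal document summaries", False, False, 8),
    ]
    for c in sequence(backlog):
        print(c.name, exposure_score(c), annual_hours_saved(c.hours_saved_per_week))
```

Under the 48-week assumption, eight hours a week comes to 384 hours a year for one team. Note that the higher-saving credit use case still sorts last, because exposure is compared before savings.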

The pattern behind most AI project failures isn't technical. It's a mismatch between the scope of the first initiative and the organization's actual readiness to absorb change. In regulated industries, that mismatch is amplified because the cost of a failed initiative isn't just a missed business target. It's reputational exposure with the regulators who govern your operating license.

Phase 3: Build toward certification, not just compliance

There's a meaningful difference between being compliant and being certifiably trustworthy, and in regulated industries, the latter is increasingly what customers, partners, and regulators want to see.

ISO 42001, the emerging international standard for AI management systems, provides a certification pathway that functions similarly to ISO 27001 for information security: a structured, auditable management system that gives external parties a credible basis for trusting your AI governance claims. According to Schellman's ISO 42001 roadmap guidance, organizations that align their AI governance work to this standard from the beginning, rather than retrofitting compliance later, are significantly better positioned for both regulatory scrutiny and third-party assurance requests.

For your roadmap, this means designing your governance documentation, your risk registers, and your model review processes with certification readiness in mind from phase one. The incremental cost is low. The credibility it generates with regulators, enterprise customers, and your own board is substantial.

This is also the stage where strong governance starts functioning as a competitive advantage rather than a cost center. BlackFog's compliance research makes the point directly: firms that embed security, accountability, and transparency into their AI deployments are better positioned to win in markets where trust is a purchasing criterion. In regulated industries, trust is always a purchasing criterion.

The governance and data foundations that make the roadmap executable

A roadmap is only as executable as the organizational infrastructure underneath it. Two foundations determine whether a regulated-industry AI roadmap stays on paper or actually moves.

The first is data readiness. AI systems in regulated environments require clean, governed, auditable data. If your customer records are split across three systems that don't communicate, or if your data lineage documentation doesn't exist, the AI models you deploy will inherit those problems and amplify them. Building an AI-ready organization requires resolving data infrastructure questions before they become model performance questions.

The second is organizational design. GrowthUp Partners' synthesis of 20 AI risk studies concludes that most AI risk arises from human behavior and institutional design, not autonomous systems. The bias, the accountability gaps, the opaque decision chains that regulators worry about are organizational problems that happen to involve AI, not AI problems that happen to affect organizations. Building your roadmap around this reality, designing the governance structure first and the technology layer second, is the single most consequential structural choice you'll make.

If you're looking for a concrete starting framework, the guide to building an AI strategy covers the organizational design work that should precede any regulated-industry deployment. And for teams already running workflows they'd like to systematically improve with AI, the AI operations and process improvement framework provides a workflow-level diagnostic that integrates cleanly with the governance structure described here.

What this means for where you start

Building an AI roadmap for a regulated industry isn't harder than building one for an unregulated environment. It's more structured. The compliance requirements, the risk classifications, and the documentation standards aren't obstacles layered on top of the real work. They're the architecture the real work gets built inside.

The organizations I see making consistent progress share three characteristics. They started with a risk map rather than a use-case wishlist. They built governance as a design input rather than a review step. And they chose a first use case small enough to execute cleanly but meaningful enough to generate internal credibility for everything that came next.

If your organization is still in the planning stage, the most valuable 90 minutes you can spend right now isn't evaluating AI platforms. It's mapping which of your existing workflows carry the highest regulatory sensitivity, and which carry the lowest. That map is the first artifact of a regulated-industry AI roadmap that can actually survive contact with your operating environment.

Take the next step

If you're building an AI roadmap inside a regulated environment and want a structured starting point, our complimentary AI Readiness Assessment identifies your highest-value automation opportunities, flags the workflows that carry the most compliance sensitivity, and produces a prioritized roadmap sized to your organization's actual readiness. No vendor pitch, no generic framework. A diagnostic built around your specific business.
